State over Tokens: Characterizing the Role of Reasoning Tokens

This post is a concise, adapted version of our paper, written by Mosh Levy, Zohar Elyoseph, Shauli Ravfogel, and Yoav Goldberg.

LLMs sometimes generate "reasoning tokens" before an answer. These sequences resemble human thought processes, yet evidence suggests they are often unfaithful as explanations. Metaphors like "Chain-of-Thought" encourage reading them as such. State over Tokens reframes these tokens as externalized computational state; this framing fits both their usefulness for the model and their unreliability as explanations when read as text.

The Vacuum Beyond the Explanation Illusion

Large language models often perform better on reasoning questions—math, logic, complex problem-solving—when they generate intermediate tokens (“reasoning tokens”) before stating their final answer. Because these tokens use first-person language, logical connectors, and apparent self-correction, they invite a natural interpretation as a record of how the model reached the answer; the “Chain-of-Thought” metaphor [1] reinforces that reading.

But a growing body of research shows that this impression can be an illusion. Evidence comes in several forms: models omit critical factors that actually influenced their answers [2,3,4,5,6]; models can arrive at correct answers while producing nonsensical or irrelevant text [7,8,9,10]; and humans cannot reliably predict the actual relations between steps in the text [11] (see the interactive demo at do-you-understand-ai.com). Taken together, these findings suggest that even when the text appears convincing and logical, it may misrepresent the reasoning process the model followed.

If the text is not a reliable explanation, a different description is needed—one that targets its functional role in generation rather than its surface narrative. Rejecting the “Chain-of-Thought” reading leaves a gap: what role do the tokens play in the generative mechanism, and what questions should we ask about that role?

This post describes how LLMs generate tokens and offers a simple way to think about them—State over Tokens—that supplies this missing language. We use it to clear up common misconceptions about step-by-step text and to open up new ways of thinking about its role and implications.

The Whiteboard Thought Experiment

Imagine being in a room with a whiteboard showing a problem—say, a complex math problem. You’re told upfront about a peculiar constraint: every 10 seconds, your memory will completely reset. Everything you figure out will vanish. You’ll forget you even looked at the problem. The only thing that will persist is what’s written on the whiteboard. In each 10-second cycle, you can add a single word to the board before your memory wipes.

Given the constraint, the text on the whiteboard becomes your only lifeline. When you “wake up” each cycle, you read the board to understand where you left off. During those 10 seconds, you might do substantial mental work—exploring approaches, weighing options, running calculations. But you can leave behind only one word to help your future self continue the process. This constraint shapes what gets written.

You might perform several mental calculations before writing down just the key result—so the whiteboard won’t capture every calculation you did. Maybe you write a crucial number, a pivotal insight, or a reminder. You might also use personal shorthand: abbreviations, symbols, or codes that mean something specific to you but look cryptic to outsiders.

Someone watching from outside the room might completely misunderstand what’s written. They might see “CHECK” and assume it means “check the previous step,” when actually that word functions as a private marker—perhaps indicating where to resume after verifying intermediate results. They might see a series of numbers without realizing they encode information that has nothing to do with the question in their direct meaning.

The analogy maps cleanly onto token-by-token generation. The problem is the user input. The words on the whiteboard are the “reasoning tokens” (the technical term for the pieces of text the model generates), the memory wipe after each word reflects that the model doesn’t maintain internal state between words except for these tokens. The 10 seconds of thinking time corresponds to the model’s computational capacity when generating each word.

(Advanced note: To fully capture how decoder-based models work, imagine that before the timer starts each cycle, you re-read the board from the beginning, trying to predict each word. This re-reading reconstructs the mental work that led to each word. Some implementations cache this reconstruction to save effort, but the words on the board fully determine it.)

Under the hood, the LLM generates each token (~word) in a separate, stateless burst of computation. Unlike humans, LLMs have no persistent memory across the generation of each word—in each cycle they make a computation based solely on the input prompt and the text generated so far. This computation is substantial, but it’s fundamentally limited. The model can’t “think longer” to produce a harder word. This makes the text the carrier of information from one step to the next.

Introducing State over Tokens

The whiteboard story illustrates the functional role: the text is a tool for the future self, encoding progress so the process can continue across memory wipes.

Like what’s written on the whiteboard, the tokens play the role of what computer scientists call “state”: the information that captures where a process currently stands and helps determine what happens next. This is an unusual form of state: encoded in language and updated with the addition of one token per cycle.

We offer to call this phenomenon State over Tokens (SoT). This perspective shifts how we think about the tokens: not as explanation to be read, but as state to be decoded.

We use this framework to break down the explanation illusion and then explore questions about this text that are easy to miss. (See the full paper for more implications of our framework.)

Unpacking the Explanation Illusion

We believe the illusion that the text is an explanation rests on two misconceptions. Using the State over Tokens framework, we now unpack both.

Misconception 1: “The text shows all the work”

Return to the whiteboard room. The problem: “What is the 9th Fibonacci number?” The rule is: each number is the sum of the two previous ones. With your repeating memory wipes and one-word-per-cycle constraint, you proceed. After reading the problem, you write “1”. Memory wipes. You wake up, read the board, write another “1”. Memory wipes. You wake up again, see two 1s, compute their sum, write “2”. And so on. Eventually, the board shows: 1, 1, 2, 3, 5, 8, 13, 21, 34.

These numbers are crucial—you can’t arrive at 34 without building through the earlier ones. But does this sequence explain the work in each cycle? It doesn’t show the mental arithmetic, the checking, the work done before each number was written. The board shows intermediate results, not the process. It’s like mistaking the scaffolding for a building. The scaffolding is essential—you can’t build without it—but it’s not the actual construction.

In each cycle, you do invisible work—exploring possibilities, running calculations, weighing options. But you can only leave behind a compressed summary: a single number. The same applies to LLMs. The text shows results that enable the next step—scaffolding that lets the process continue—but it doesn’t have to show the actual computation. We see what the model left for itself, not necessarily how it got there.

Misconception 2: “The words mean what they appear to mean”

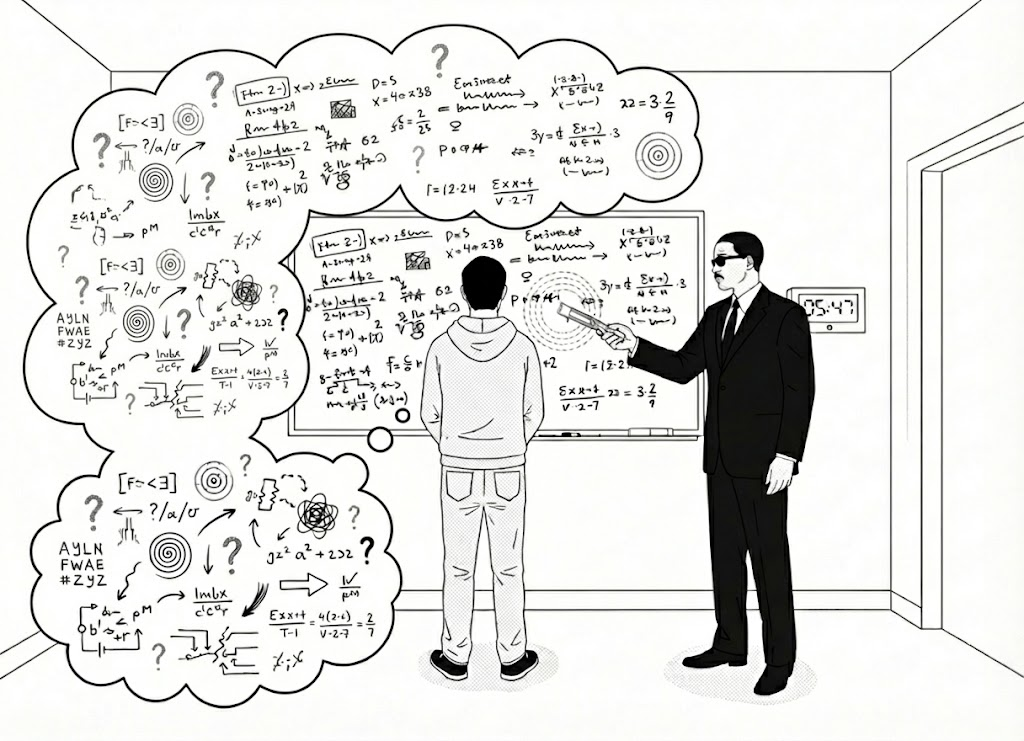

Even if we accept that the whiteboard shows only partial results, we might still assume that outside observers trying to understand your process by reading the board would interpret what appears there the same way you do—and maybe connect the dots to figure out your considerations and calculations. But this assumption is also mistaken.

Imagine the observer sees you write: 11, 11, 12, 13, 15, 18, 23, 31, 44. These aren’t Fibonacci numbers at all.

Suppose you’ve developed a personal encoding scheme. When you wake up each cycle, you read each number, subtract 10, then use the real values to compute the next Fibonacci number. Before writing it down, you add 10. So when the board says “11, 11, 12, 13, 15, 18, 23, 31, 44” you internally decode it as “1, 1, 2, 3, 5, 8, 13, 21, 34” and use those values for your computation. At the final step, you subtract 10 and report the true answer: 34.

This encoding is arbitrary. The surface appearance can completely mislead outside observers trying to understand what you did. But it works perfectly for you. Future-you knows the system: decode, compute, encode, write. Why might you do this? Perhaps it’s a quirk that emerged early and became a habit. Or perhaps when you first entered the room, you noticed the number 11 on the door—and when it came time to write down the first real Fibonacci number (1), you wrote 11 instead, following that association. Or perhaps adding 10 during calculations is something that just feels familiar and reliable to you so you do it. Or perhaps your instructions for producing the 9th Fibonacci number explicitly prohibited writing the Fibonacci sequence on the board, so you chose an encoding that hides it on the surface while keeping it usable for your process. The encoding might have stuck not because of logical necessity, but because it works for you. Existing work on how models can embed information in ways that are hard for humans to detect suggests that real-world encodings can be much more complex than this toy example [12]. What appears on the board may look wrong to observers trying to interpret your process while being perfectly functional for you—the numbers do not mean what they appear to mean.

Now extend this to language. When the model writes “I need to reconsider this approach,” what does that phrase actually do? It might genuinely represent a self-check. But it also might encode something entirely different. Perhaps in the model’s internal “dictionary,” that phrase functions as a marker meaning “branch point ahead” or “store current assumption for later verification”—something that doesn’t match any familiar human mental move. The model writes for itself on its computational whiteboard, potentially using its own encoding system. A phrase that looks meaningful in English might function differently in the model’s process. And a phrase that looks like gibberish to us might be meaningful to the model—encoded in a way we don’t naturally interpret.

This may explain why researchers found that models can produce apparent nonsense and still get correct answers. The text wasn’t nonsense to the model—it was encoded state on its whiteboard. It was only nonsense when read with standard English semantics; we just couldn’t read the encoding.

Why Does It Look Like English?

If these tokens mainly function as state for the model’s process, why do they read like ordinary English? There can be many explanations for that. One possible explanation is that these models are trained on massive amounts of English text. When they generate any tokens—whether it’s an answer to a user or intermediate tokens for their own process—they use the patterns and structures they learned during training. (See the full paper for more discussion on this.)

The same tokens exist as two fundamentally different kinds of things: for human readers, the tokens are text; for the model, the tokens are state. The fact that it reads like coherent English encourages us to interpret it as a transparent explanation, when what matters for the model may be something other than what the words appear to say.

What This Means Going Forward

Thinking of the tokens as a state mechanism encoded in language makes the practical implications clearer.

For anyone using AI systems

The text should not be assumed to explain how the model arrived at its answer. It can increase confidence without providing real justification. This matters especially in high-stakes domains like medicine, law, or finance.

Sometimes, the text will happen to present a valid sequence of steps from question to answer. If you carefully check each step and confirm that the logic holds, it can serve as supporting evidence for trusting the answer. But this confidence should come from your verification, not from the text itself being a direct view into the model’s process.

For AI researchers and developers

This perspective suggests a different way to study the text. Instead of reading it as plain English and assuming we understand what’s happening, we argue that we need to decode it—similar to decoding a compressed file format or reverse-engineering a protocol.

This opens research questions: How do models decide what information to encode at each step? Do they use consistent encoding schemes across different problems? What information is actually embedded in the state at different points? How does information propagate through the sequence?

There’s also a deeper question: Is language special for this purpose? Human language has many years of evolutionary optimization—does something about its structure make it particularly good for encoding computational state? Or could models use other media equally well? Some researchers are exploring alternatives, but whether language has unique advantages remains unclear.

A further question: Could we ever get text that serves both purposes—functioning as computational state for the model and providing faithful explanations to humans? This framework clarifies why that’s difficult: it requires the model to simultaneously perform computation and describe that computation through the same medium. But understanding the challenge is a necessary first step.

The Takeaway

Much discussion about LLMs reasoning abilities focuses on what the text isn’t. But criticism alone leaves a vacuum. If it’s not an explanation, what is it?

State over Tokens: computational state encoded in the token sequence—a functional tool the model creates for itself to maintain a process across multiple steps of limited computation. Not a transparent window into the model’s reasoning process in English terms, but the scaffolding that enables the process to happen at all.

This matters for building more reliable AI systems: understanding the functional role of these tokens might help us design better architectures that use this scaffolding effectively. It matters for responsible deployment: we need to set trust based on what the text actually does, not what it appears to say. Systems that generate elaborate text might not be more trustworthy than systems that don’t—we still need real-world validation rather than accepting the apparent rigor.

For AI safety and governance, this strengthens the argument for not taking the model’s text at face value, and instead investing more effort in methods that probe the actual computations the model runs.

State over Tokens doesn’t settle everything here. But it moves us from criticism to positive characterization—from “it’s not what it looks like” to “here’s what it actually does.” We believe that shift provides a foundation for better interpretability methods and clearer thinking about what AI can and cannot do.

Full paper: https://arxiv.org/abs/2512.12777

References

[1] Wei, J. et al. (2022). "Chain-of-thought prompting elicits reasoning in large language models." Advances in Neural Information Processing Systems.

[2] Turpin, M. et al. (2023). "Language Models Don't Always Say What They Think: Unfaithful Explanations in Chain-of-Thought Prompting." Advances in Neural Information Processing Systems.

[3] Yee, E. et al. (2024). "Dissociation of faithful and unfaithful reasoning in LLMs." arXiv:2405.15092.

[4] Chua, J. & Evans, O. (2025). "Are DeepSeek R1 and other reasoning models more faithful?" arXiv:2501.08156.

[5] Arcuschin, I. et al. (2025). "Chain-of-thought reasoning in the wild is not always faithful." arXiv:2503.08679.

[6] Marioriyad, A. et al. (2025). "Unspoken Hints: Accuracy Without Acknowledgement in LLM Reasoning." arXiv:2509.26041.

[7] Lanham, T. et al. (2023). "Measuring Faithfulness in Chain-of-Thought Reasoning." arXiv:2307.13702.

[8] Chen, Y. et al. (2025). "Reasoning Models Don't Always Say What They Think." arXiv:2505.05410.

[9] Stechly, K. et al. (2025). "Beyond Semantics: The Unreasonable Effectiveness of Reasonless Intermediate Tokens." arXiv:2505.13775.

[10] Bhambri, S. et al. (2025). "Interpretable Traces, Unexpected Outcomes: Investigating the Disconnect in Trace-Based Knowledge Distillation." arXiv:2505.13792.

[11] Levy, M., Elyoseph, Z., & Goldberg, Y. (2025). "Humans Perceive Wrong Narratives from AI Reasoning Texts." arXiv:2508.16599.

[12] Cloud, A. et al. (2025). "Subliminal Learning: Language models transmit behavioral traits via hidden signals in data." arXiv:2507.14805.